Salman Khan's Email & Phone Number

Senior Consultant | Federal Health Care Practice | Information Management

Salman Khan Email Addresses

Salman Khan's Work Experience

Senior Consultant | Federal Health Care | Information Management (Full Time)

December 2014 to September 2018

Boeing

Senior Informatica Consultant

June 2012 to June 2013


Salman Khan's Education

TKR Institute of Management & Science

January 2001 to January 2003


Frequently Asked Questions about Salman Khan

What is Salman Khan's email address?

Email Salman Khan at [email protected] and [email protected]. These are the most up-to-date email addresses for Salman Khan found in 2024.

What is Salman Khan's phone number?

Salman Khan's phone number is +1.4439859368.

How to contact Salman Khan?

To contact Salman Khan, send an email to [email protected] or [email protected]. If you want to call Salman Khan, try +1.4439859368.

What company does Salman Khan work for?

Salman Khan works for Confidential.

What is Salman Khan's role at Confidential?

Salman Khan is a Sr. Developer.

What industry does Salman Khan work in?

Salman Khan works in the Information Technology & Services industry.


Salman Khan's Estimated Salary Range

Salman Khan Email Addresses

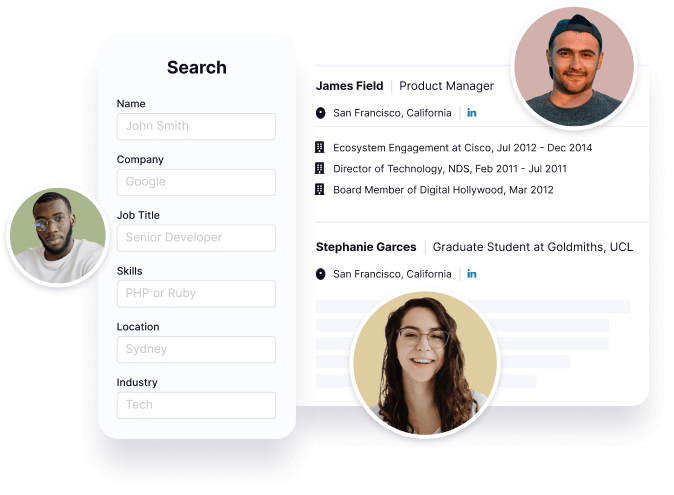

Find emails and phone numbers for 300M professionals.

Search by name, job titles, seniority, skills, location, company name, industry, company size, revenue, and other 20+ data points to reach the right people you need. Get triple-verified contact details in one-click.In a nutshell

Salman Khan's Ranking

Ranked #211 out of 4,227 for Sr. Developer in Maryland

Salman Khan's Personality Type

Introversion (I), Sensing (S), Thinking (T), Perceiving (P)

Average Tenure

2 years, 0 months

Salman Khan's Willingness to Change Jobs


Open to opportunity?

There's a 100% chance that Salman Khan is seeking new opportunities.

Salman Khan's Social Media Links

/in/salmanrkhan
/company/kaiser-permanente