Hugo Louro's Email & Phone Number

Sr. Software Engineer at AppDynamics

Hugo Louro Email Addresses

Hugo Louro Phone Numbers

Hugo Louro's Work Experience

RealFevr

Streaming Data Architect

May 2018 to Present

TIBCO Software Inc.

Senior Software Engineer

February 2008 to July 2013

Visiting Scholar @ Stanford Mood and Anxiety Disorders Laboratory

January 2008 to January 2010

Research Associate @ Translational and Developmental Neuroscience Laboratory

January 2006 to January 2008

Research Fellow @ Communications and Signal Processing Laboratory

January 2004 to January 2006

Hugo Louro's Education

University of Michigan

PhD student, MSE in Electrical Engineering. Major: Signal Processing, Machine Learning

Instituto Superior Técnico

BSE, MSE in Electrical Engineering and Computer Science (Summa Cum Laude)

Frequently Asked Questions about Hugo Louro

What is Hugo Louro's email address?

Email Hugo Louro at [email protected] or [email protected]. These are the most recent email addresses found for Hugo Louro in 2024.

How can I contact Hugo Louro?

To contact Hugo Louro, send an email to [email protected] or [email protected].

What company does Hugo Louro work for?

Hugo Louro works for AppDynamics.

What is Hugo Louro's role at AppDynamics?

Hugo Louro is a Senior Software Engineer.

What is Hugo Louro's Phone Number?

Hugo Louro's phone number is (**) *** *** 135.

What industry does Hugo Louro work in?

Hugo Louro works in the Computer Software industry.

Hugo Louro Email Addresses

Hugo Louro Phone Numbers

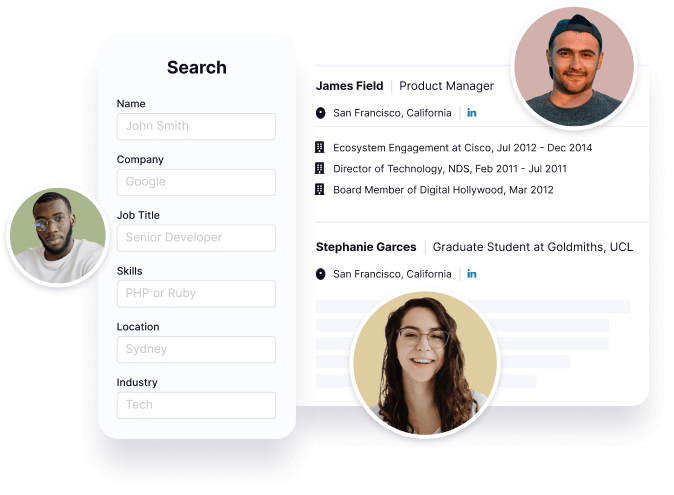

Find emails and phone numbers for 300M professionals.

In a nutshell

Hugo Louro's Personality Type

Introversion (I), Sensing (S), Thinking (T), Perceiving (P)

Average Tenure

2 years, 0 months

Hugo Louro's Willingness to Change Jobs

Open to opportunity?

There's a 90% chance that Hugo Louro is seeking new opportunities.

Hugo Louro's Social Media Links

/in/hlouro /school/university-of-michigan/